Ivan Skorokhodov

I am a Research Scientist at Snap Research (Creative Vision team), working on image/video/3D generative models. I obtained my PhD degree from KAUST in March 2023, where I was a part of Visual Computing Center, supervised by prof. Peter Wonka and prof. Mohamed Elhoseiny. Before that, I was a deep learning researcher at MIPT for 2 years — first, working on NLP and then, on loss landscape analysis. Before MIPT, I was a software engineer at Yandex for 1.5 years.

Selected research projects

-

Contemporary models for generating images show remarkable quality and versatility. Swayed by these advantages, the research community repurposes them to generate videos. Since video content is highly redundant, we argue that naively bringing advances of image models to the video generation domain reduces motion fidelity, visual quality and impairs scalability. In this work, we build Snap Video, a video-first model that systematically addresses these challenges. To do that, we first extend the EDM framework to take into account spatially and temporally redundant pixels and naturally support video generation. Second, we show that a U-Net - a workhorse behind image generation - scales poorly when generating videos, requiring significant computational overhead. Hence, we propose a new transformer-based architecture that trains 3.31 times faster than U-Nets (and is ~4.5 faster at inference). This allows us to efficiently train a text-to-video model with billions of parameters for the first time, reach state-of-the-art results on a number of benchmarks, and generate videos with substantially higher quality, temporal consistency, and motion complexity. The user studies showed that our model was favored by a large margin over the most recent methods.

-

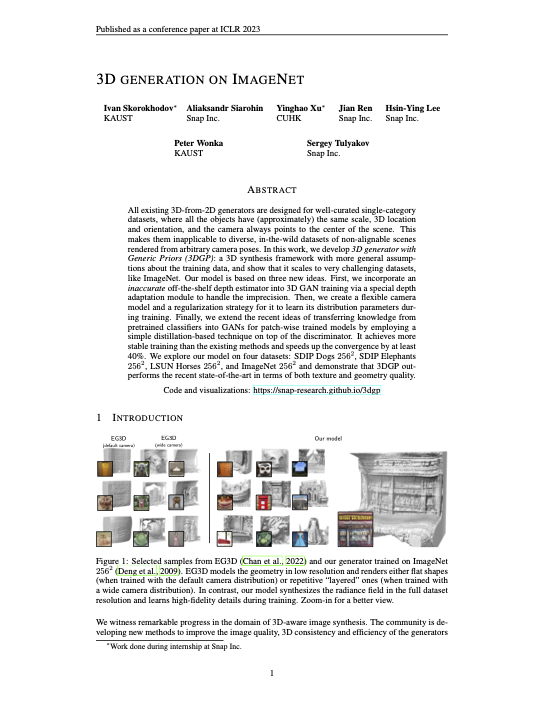

3D generation on ImageNet

ICLR 2023 (Oral)

Existing 3D-from-2D generators are typically designed for well-curated single-category datasets, where all the objects have (approximately) the same scale, 3D location and orientation. This makes them inapplicable to diverse, in-the-wild datasets of non-alignable scenes rendered from arbitrary camera poses. In this work, we develop a 3D generator with Generic Priors (3DGP): a 3D synthesis framework with more general assumptions about the training data, and show that it scales to very challenging datasets, like ImageNet. Our model is based on three new ideas: 1) using an off-the-shelf depth estimator to guide the learning of 3D geometry; 2) a flexible learnable camera generator and a regularization strategy for; and 3) knowledge distillation into the discriminator to transfer the external knowledge from a pre-trained feature extractor. We explore our model on four datasets and demonstrate that 3DGP outperforms the recent state-of-the-art in terms of both texture and geometry quality.

-

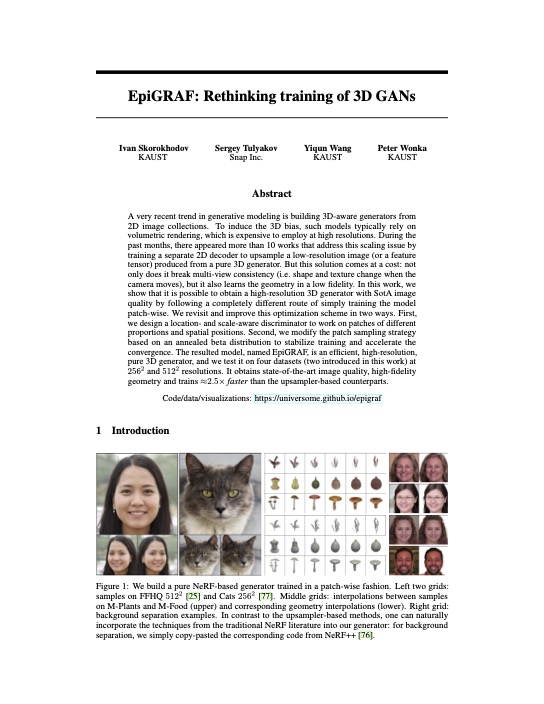

EpiGRAF: Rethinking training of 3D GANs

NeurIPS 2022

In the past several months, there appeared 10+ works that speed up NeRF-based GANs by training a separate 2D decoder to upsample a low-resolution 3D representation produced from the NeRF generator. This solution comes at a cost: it break multi-view consistency and learns the geometry in a low resolution. Instead, we show that it is possible to obtain a high-resolution 3D generator with SotA image quality by simply training the model patch-wise. We revisit and improve this optimization scheme in two ways: 1) by designing a location- and scale-aware discriminator to work on patches of different proportions and spatial positions; and 2) modifying the patch sampling strategy based on an annealed beta distribution to stabilize training and accelerate the convergence. The resulted model, named EpiGRAF, is an efficient, high-resolution, pure 3D generator, and we test it on four datasets (two introduced in this work) at \(256^2\) and \(512^2\) resolutions. It obtains state-of-the-art image quality, high-fidelity geometry and trains \({\approx} 2.5 \times\) faster than the upsampler-based counterparts.

-

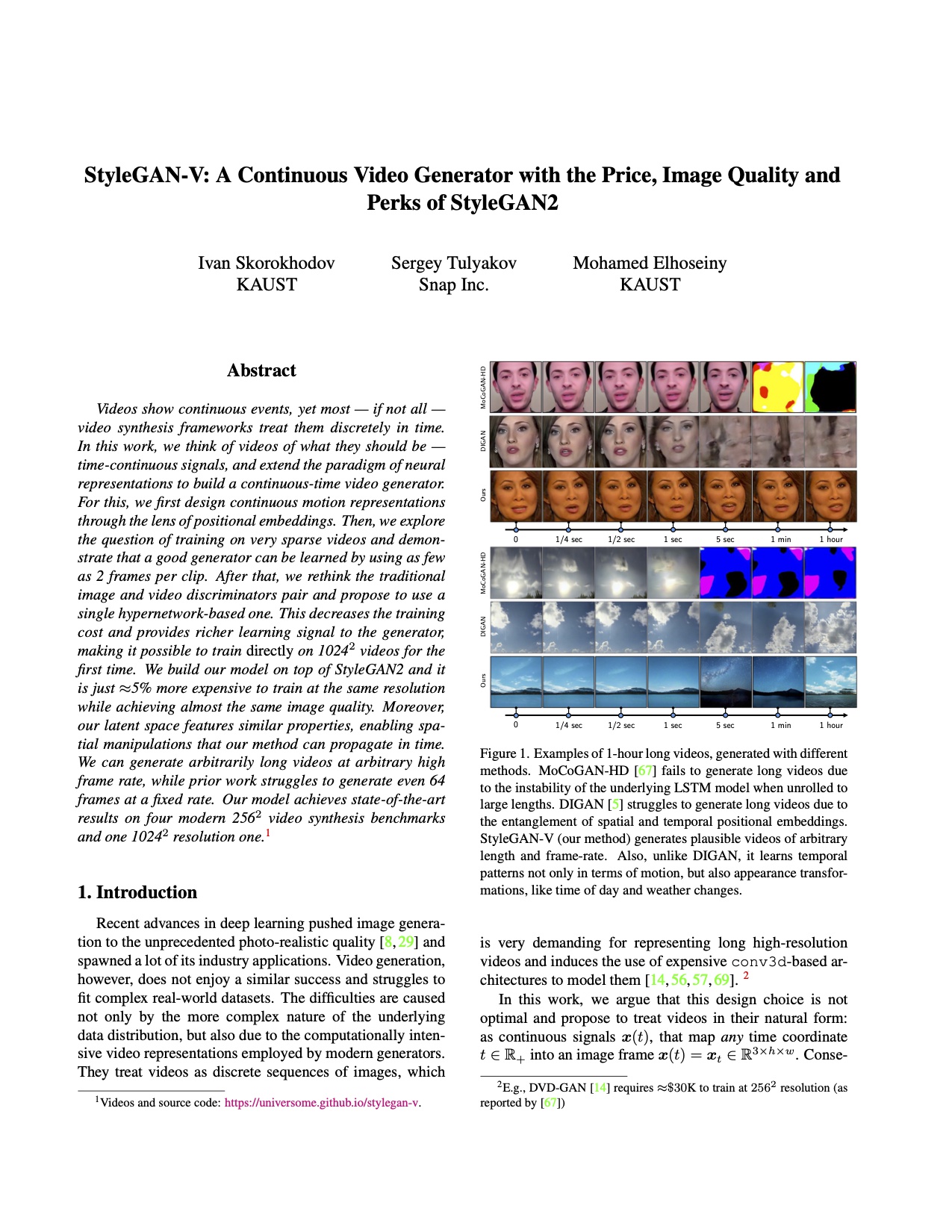

StyleGAN-V: A Continuous Video Generator with the Price, Image Quality and Perks of StyleGAN2

CVPR 2022

We build a non-autoregressive video generator which is continuous in time. It is based on StyleGAN2 and we rethink fundamental components of video synthesis models. First, we redesign the motion codes to be continuous by structuring them as acyclic positional embeddings. Then, we drop the usage of expensive Conv3d layers and aggregate the temporal information across frames by simple concatenation. Finally, we demonstrate that a state-of-the-art video generator could be trained with a very sparse sampling scheme, using just 2-3 frames per clip. Our modifications greatly improve the training efficiency of our model and we achieve strong state-of-the-art results on FaceForensics \(256^2\), Sky Timelapse \(256^2\), UCF-101 \(256^2\), Rainbow Jelly \(256^2\) and MEAD \(1024^2\). We also demonstrate the video manipulation properties of our generator, like projecting a video into its latent space using just a single frame and CLIP-based editing.

-

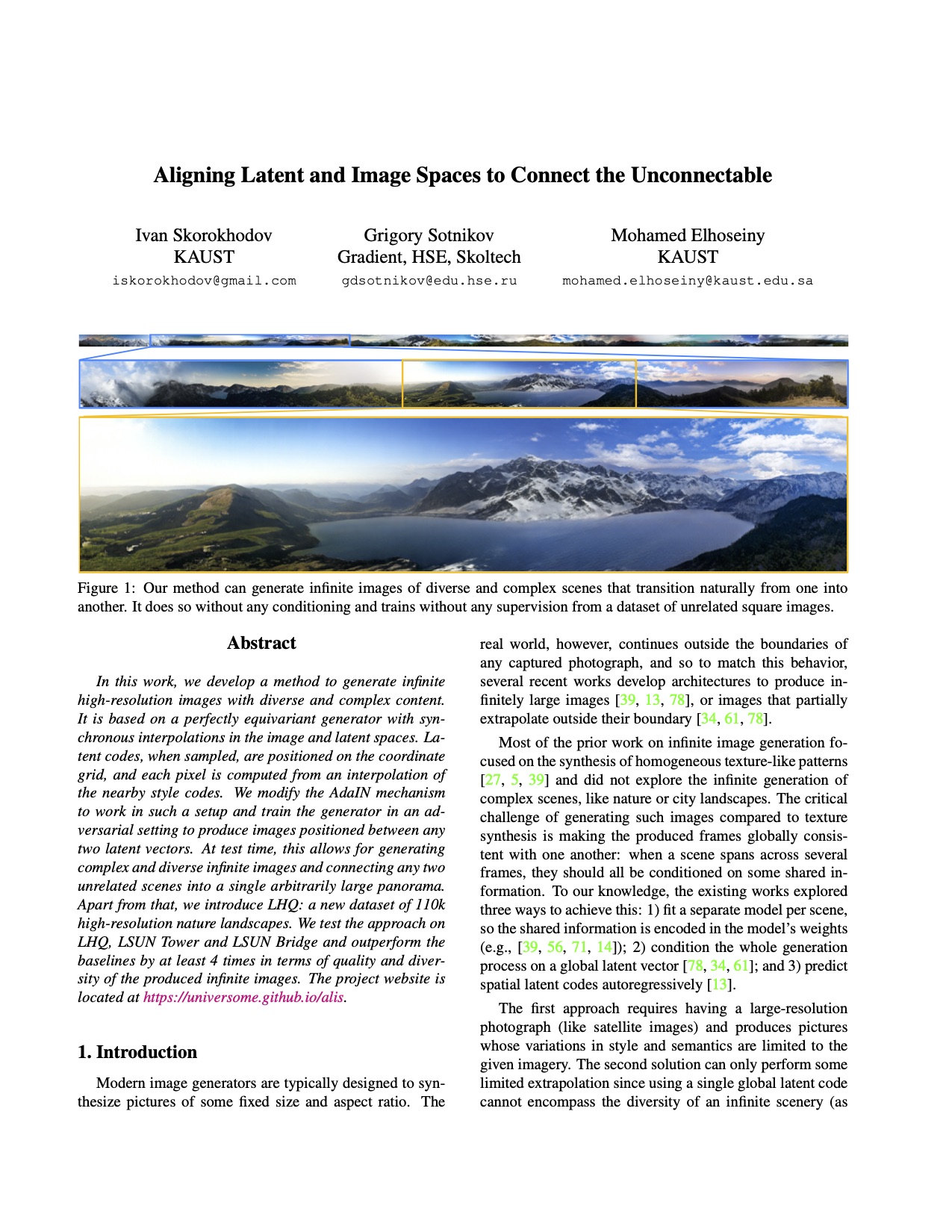

We proposed an idea of positioning GAN's latent codes on the coordinates plane. This means that each latent code, when sampled, is getting associated with an \((x,y)\)-position of the 2D image plane and our generator computes a color of a pixel from the interpolation of the neighboring latent codes (instead of just a single global one). This allows us 1) to generate images of infinite size (by generating infinitely many latent codes and positioning them on the grid); and 2) connecting unrelated frames into a single, arbitrarily large panorama.

-

We built a GAN model that generates images in the implicit neural representation (INR) form. An INR is a function \(F(c)\) which takes coordinates \(c = (x, y)\) as input and predicts a pixel value \(v = (r, g, b)\). In this way, our generator is a hypernetwork that generates parameters for \(F(c)\). We proposed two techniques to scale such a model to real-world datasets: factorized multiplicative modulation (FMM) and multi-scale INRs. We achieved decent (for INR-based models) generative quality on LSUN Churches \(256^2\), LSUN Bedrooms \(256^2\), and FFHQ \(1024^2\) and showed a lot of interesting properties of INR-based decoders. At the end of the day, our approach turned out to be very similar to StyleGAN2 with 1x1 convolutions, coordinate embeddings, and nearest neighbor upsampling.

-

Class Normalization for (Continual?) Generalized Zero-Shot Learning

ICLR 2021

In this paper, we dived into normalization techniques used in zero-shot learning (ZSL). We showed how scaled cosine similarity and attributes normalization influences signal's variance inside a model. We showed that for deeper models, there is a need for other normalization procedures and developed class normalization, which is similar to batch normalization but applied across the class dimension. Using class normalization, we built an MLP model that achieves state-of-the-art performance and trains x50-200 times faster than the current SotA. We also formulated a novel continual zero-shot learning problem and tested our approach in that setup.

-

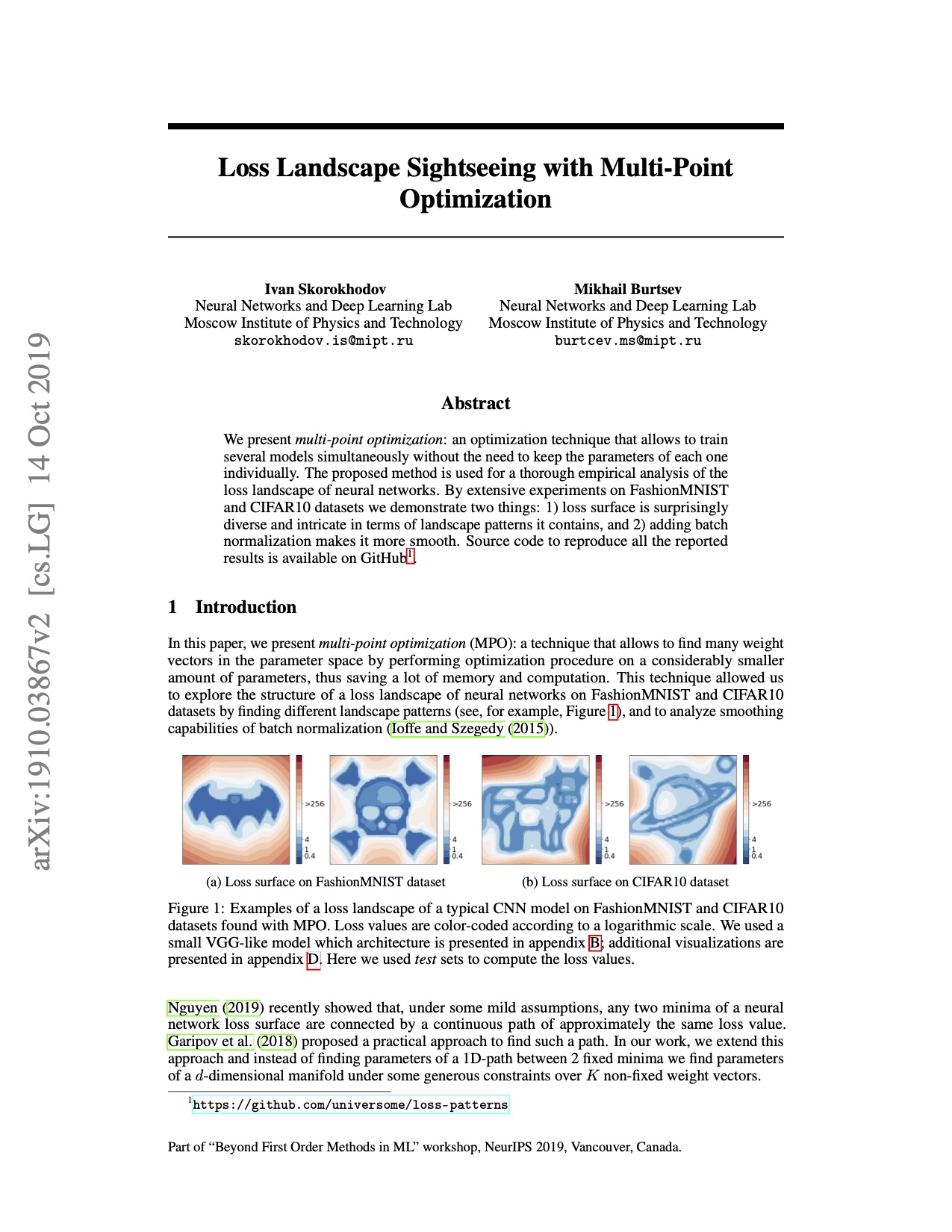

Loss Landscape Sightseeing with Multi-Point Optimization

Beyond First Order Methods in ML workshop, NeurIPS 2019

Using mode connectivity ideas, we searched loss landscapes of different neural networks for different visual patterns. Due to the extreme overparametrization, it turned out that any pattern can be found inside the surface. This indicates that the loss landscapes of deep models are very complex and contain many irregularities.

-

While the existing interpolation techniques (nearest neighbour, bilinear, Lanczos, Hamming, etc.) assume that the known points positions construct a uniform grid, it is not always the case. Moreover one would like to backpropagate through these points positions. In this project, I implemented a CUDA kernel for points interpolation on a non-uniform grid based on the Gaussian Mixture Model.

-

RtRs

- rust

RtRs is a small ray-tracing/rasterization engine written in rust. It works on both meshes and traditional quadrics and has some cool features however, like distributed RT/BVHs/arcball rotations/etc.

-

Omniplan Web App

- javasript

- react

Omniplan was extensively used at my previous work but didn't have any web interface which made everyone annoyed. So I built one using their official API.

-

Firelab

- python

- pytorch

During the past 3 years, I had been building a framework for running deep learning experiments in pytorch and using it in my research projects. It is very similar to pytorch-lightning + hydra, but without a proper documentation and testing ¯\_(ツ)_/¯

-

DL reasoner

- rust

An ALCQ description logic reasoner based on the tableau algorithm.